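This means that each term of the sum multiplies the true probability `y` of a class by the natural logarithm of the predicted probability `p` for that class and negates the result, giving `-y * ln(p)`. Because a probability lies between 0 and 1, its logarithm is zero or negative, so each term (and therefore the total loss) is zero or positive.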

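How do you calculate cross-entropy loss? Cross-entropy loss compares the model's predicted probabilities with the actual (true) output. It is the sum, over all classes, of `-y * ln(p)`, where `y` is the true probability of a class and `p` is the predicted probability. With a one-hot label, this reduces to the negative log of the probability the model assigns to the correct class. In the apples-and-oranges example below, if the fruit is an apple, a prediction that gives apple a higher probability has a lower cross-entropy loss than one that favors orange. We therefore compute the cross-entropy loss from the probabilities the model assigns to each fruit and train the model to make that loss smaller.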


A common example used to understand cross-entropy loss compares apples and oranges: each fruit has a certain probability of being chosen out of three classes (apple, orange, or other). If the correct answer is apple, then for our model to make a correct prediction it should assign a high probability to apple and a low probability to orange; for example, apple might get 80% while orange gets only 20%. Cross-entropy loss incurs a large penalty when the model assigns a much higher probability to a wrong class than to the correct one. During training, the loss is usually computed on a small subset of data points (a mini-batch) to measure how accurate the neural network is. Now, let's implement the cross-entropy loss function.
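A minimal sketch in plain Python of the loss for the apples-and-oranges example. The function name `cross_entropy`, the `eps` clipping constant, and the "bad guess" distribution are illustrative assumptions rather than anything from a particular library; the formula itself is the sum of `-y * ln(p)` over classes.

```python
import math

def cross_entropy(y_true, y_pred, eps=1e-12):
    """Return -sum(y * ln(p)) for a true distribution y_true and a predicted
    distribution y_pred, given as equal-length lists of probabilities."""
    total = 0.0
    for y, p in zip(y_true, y_pred):
        if y > 0:  # terms with y == 0 contribute nothing, so skip them
            total -= y * math.log(max(p, eps))  # clip p at eps to avoid ln(0)
    return total

# Class order: apple, orange, other. The true fruit is an apple (one-hot label).
y_true = [1.0, 0.0, 0.0]

good_guess = [0.8, 0.2, 0.0]  # high probability on apple
bad_guess = [0.2, 0.8, 0.0]   # high probability on orange (hypothetical)

print(f"{cross_entropy(y_true, good_guess):.3f}")  # 0.223
print(f"{cross_entropy(y_true, bad_guess):.3f}")   # 1.609
```

With a one-hot label only the apple term survives, so the loss is simply `-ln(0.8)` for the good guess and `-ln(0.2)` for the bad one.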


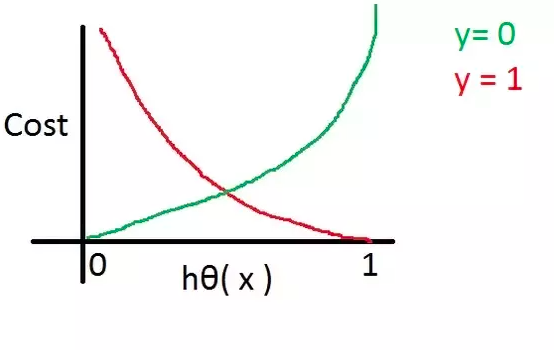

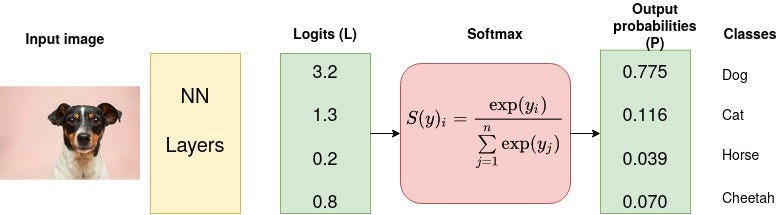

A softmax function converts the model's raw output scores (logits, which can be read as unnormalized log probabilities) into ordinary probabilities that sum to 1. Cross-entropy loss is very similar to cross-entropy. Cross-entropy measures the difference between an actual probability distribution `y` and a predicted distribution `p` as `-sum(y_i * ln(p_i))`; cross-entropy loss is this same quantity computed between the true labels and the model's predicted probabilities, typically averaged over the training samples. The model calculates the probability that each sample belongs to each of the classes and then uses the cross-entropy between these probabilities and the labels as its cost function. The idea of this loss function is to give a high penalty for wrong predictions and a low penalty for correct ones: the more confident the model is in the correct class, the smaller the penalty it incurs. Cross-entropy loss is also used in time-series classification problems, for example on weather or stock data.
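To connect the softmax step with the loss, here is a small sketch that goes from raw scores to a per-sample loss. The logit values are hypothetical, and computing the loss as log-sum-exp minus the true-class logit is a common numerically stable choice assumed here, not something the text prescribes; it equals `-ln(softmax(logits)[target])`.

```python
import math

def softmax(logits):
    """Turn raw scores (logits) into probabilities that sum to 1."""
    m = max(logits)  # shifting by the max avoids overflow and leaves the result unchanged
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy_from_logits(logits, target):
    """Cross-entropy loss -ln(softmax(logits)[target]) for one sample,
    computed stably as log-sum-exp(logits) - logits[target]."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]

# Hypothetical logits for (apple, orange, other); the true class is apple (index 0).
less_confident = [1.0, 0.5, 0.0]
more_confident = [4.0, 0.5, 0.0]

print(softmax(less_confident))
print(cross_entropy_from_logits(less_confident, 0))
print(cross_entropy_from_logits(more_confident, 0))  # smaller loss: more confident and correct
```

Working from logits avoids taking the log of a probability that has already underflowed to 0.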

An example of using cross-entropy loss for a multi-class classification problem is training a model on the MNIST dataset of handwritten digits, where each image belongs to one of ten classes. When it is combined with a softmax output layer, cross-entropy loss is also called "softmax loss". The loss (or error) is a non-negative number: it is 0 when the model assigns probability 1 to the correct class and grows without bound as that probability approaches 0.
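The sketch below shows two things from this paragraph: averaging the loss over a mini-batch, as a training loop for an MNIST-style classifier would, and how the per-sample loss behaves across its range. The batch uses three hypothetical classes for brevity (a real MNIST model would output ten probabilities per image), and `mean_cross_entropy` is an illustrative name, not a library function.

```python
import math

def mean_cross_entropy(probs_batch, labels):
    """Average cross-entropy over a mini-batch.

    probs_batch: one list of predicted class probabilities per sample
    labels: the true class index of each sample (e.g. a digit 0-9 for MNIST)
    """
    losses = [-math.log(probs[label]) for probs, label in zip(probs_batch, labels)]
    return sum(losses) / len(losses)

# A hypothetical 3-class mini-batch of predicted probabilities.
probs_batch = [
    [0.7, 0.2, 0.1],
    [0.1, 0.8, 0.1],
    [0.3, 0.3, 0.4],
]
labels = [0, 1, 2]
print(f"mean loss: {mean_cross_entropy(probs_batch, labels):.4f}")

# The per-sample loss -ln(p) is 0 when the true class gets p = 1
# and grows without bound as p approaches 0.
for p in (1.0, 0.5, 0.1, 0.001):
    print(f"p = {p}: loss = {math.log(1.0 / p):.3f}")  # ln(1/p) equals -ln(p)
```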

Simply speaking, cross-entropy loss measures the difference between two probability distributions: the one the model assigns to the classes and the true one. It is a metric used to measure how well a classification model in machine learning performs. To train an artificial neural network (ANN), we need to define a differentiable loss function that assesses the quality of the network's predictions by assigning a low loss value to a correct prediction and a high loss value to a wrong one. Cross-entropy loss plays this role in classification tasks: training minimizes the average loss over the training data, which is equivalent to maximizing the log-probability the model assigns to the correct classes.

#Derivative of softmax

Because the softmax function outputs a probability distribution, it is commonly used as the final layer in classification networks. To train such a network, we need to calculate the derivative (gradient) of the loss and pass it back to the previous layer during backpropagation. When softmax is followed by cross-entropy loss, the gradient of the loss with respect to the softmax inputs (logits) is `p - y`, where `p` is the softmax output and `y` is the one-hot true label, which is a very simple and elegant expression.
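One way to see where `p - y` comes from is to build the full softmax Jacobian, `dp_i/dz_j = p_i * (delta_ij - p_j)`, apply the chain rule with `dL/dp_i = -y_i / p_i`, and compare the result with the shortcut. The sketch below does this for a single sample with a one-hot label; the logit values and function names are hypothetical, chosen only for illustration.

```python
import math

def softmax(logits):
    """Numerically stable softmax: probabilities that sum to 1."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def softmax_jacobian(logits):
    """J[i][j] = dp_i/dz_j = p_i * (delta_ij - p_j)."""
    p = softmax(logits)
    n = len(p)
    return [[p[i] * ((1.0 if i == j else 0.0) - p[j]) for j in range(n)] for i in range(n)]

def grad_via_chain_rule(logits, target):
    """dL/dz_j = sum_i dL/dp_i * dp_i/dz_j, where L = -ln(p[target])."""
    p = softmax(logits)
    jac = softmax_jacobian(logits)
    n = len(p)
    # With a one-hot label only the true class has a nonzero dL/dp_i = -1 / p_i.
    dL_dp = [(-1.0 / p[i]) if i == target else 0.0 for i in range(n)]
    return [sum(dL_dp[i] * jac[i][j] for i in range(n)) for j in range(n)]

def grad_shortcut(logits, target):
    """The simplified result: p - y, with y the one-hot label."""
    p = softmax(logits)
    return [p_j - (1.0 if j == target else 0.0) for j, p_j in enumerate(p)]

logits = [2.0, 1.0, 0.1]  # hypothetical raw scores; the true class is index 0
print(grad_via_chain_rule(logits, 0))
print(grad_shortcut(logits, 0))  # matches the chain-rule result up to rounding
```

In practice, frameworks typically fuse softmax and cross-entropy so that the backward pass can use `p - y` directly instead of forming the Jacobian.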